Virtual memory technique

Virtual memory is a method of overcommitting memory: using more memory addresses than are physically available. This article explains how the virtual memory manager of OS/2 and the MEMMAN variable in CONFIG.SYS make use of it.

- Overlay Technique

- Virtual Memory Technique

- The Protected Mode of the Intel Processor

- Virtual Memory and Money Compared

- The Lazy Memory Manager of OS/2

- Advantages of Lazy Commit

- The Working Set Concept

- MEMMAN=SWAP,PROTECT,COMMIT

- MEMMAN=NOSWAP,PROTECT

- Virtual Address Space under OS/2

- The System Arena

- The Shared Arena

- The Private Arena

- The Free Pool of Memory

- The High Memory Arenas

- Out of Memory Errors on Current OS/2 Systems

- More Reading

Overlay Technique

Before virtual memory was introduced programmers had two choices. They could write programs that fitted in physical memory, or they could use an overlay technique that swapped pieces (segments) of the program in and out of physical (primary) memory from an I/O device like tape or disk (secondary memory). The swapping was done by the program itself. With only 4 KiB of memory programmers did not want the overhead of an operating system.

The development of virtual memory by a group of researchers at the University of Manchester in Manchester, England (Fotheringham 1961 [1]; Kilburn et al 1962 [2]) was in fact an automation of this overlay technique. When the swapping of memory segments was delegated to the memory manager of the operating system, all programs benefitted from it.

Virtual Memory Technique

Virtual memory technique makes use of the fact that most processors can internally address much more memory than the computer really has.

The Intel 80286 processor supported 16 MiB (2^24) of physical memory externally via 24 address lines, along with the 16 bit PC/AT data bus, but internally handled 1 GiB of virtual memory. The fully 32 bit Intel 386(DX) processor addressed 4 GiB (2^32) of memory externally on a 32 bit data bus, but internally 64 TiB of virtual memory. But 32 bit hardware was expensive. The later 386SX therefore went back to a 16-bit data bus and a 24-bit address bus to support the more realistic 16 MiB of 16 bit OS/2 1.0 and Windows 3.x.
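The figures above can be checked with a little arithmetic. The sketch below is only an illustration: the function names are mine, and it assumes the usual Intel selector layout (a 13 bit index plus one bit that chooses between the global and the local descriptor table, so 2^14 segments per task).

```python
KIB, MIB, GIB, TIB = 2**10, 2**20, 2**30, 2**40

def physical_bytes(address_lines: int) -> int:
    """Physical memory reachable over the given number of address lines."""
    return 2 ** address_lines

def virtual_bytes(selectors: int, max_segment_bytes: int) -> int:
    """Virtual address space: number of segments times the largest segment."""
    return selectors * max_segment_bytes

# Assumption: 13 index bits + 1 table bit (GDT or LDT) = 2**14 selectable segments.
SELECTORS = 2 ** 14

print(physical_bytes(24) // MIB, "MiB physical (80286, 386SX)")
print(physical_bytes(32) // GIB, "GiB physical (386DX)")
print(virtual_bytes(SELECTORS, 64 * KIB) // GIB, "GiB virtual (80286)")
print(virtual_bytes(SELECTORS, 4 * GIB) // TIB, "TiB virtual (386DX)")
```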

With virtual memory user programs and the operating system could benefit from the large range of internal addresses of the processor (virtual address space). The operating system could give them to user programs almost for free. But as processors only can work with code and data that are in physical memory, the operating system had to resolve accessed internal addresses to real memory addresses on the bus. What did not fit in physical memory was discarded or swapped (aka: paged) to disk.

To help the operating system, processors soon got a hardware based Memory Management Unit (MMU) that mapped the physical and virtual memory addresses of resident programs. It was closely integrated with facilities like memory protection, caches, and bus arbitration.

Virtual memory techniques resembled the overlay technique of 4 KiB programmers who executed big programs by loading new code and data endlessly from tape. But in the overlay technique the programmer decided when the swapping would occur. If the swapping of a memory segment was wrongly timed, the program would trap [terminate spontaneously].

But the memory manager of a multitasking operating system had no idea of the program's intentions. So it handled virtual memory requests and just had to make sure that the accessed virtual memory addresses were mapped in a timely manner and loaded into physical memory (on demand paging). This was a matter of trial and error, helped by some best guess algorithms.

Paging required a fast Direct Access Storage Device (DASD) for memory backup and paging tables to keep track of it. Virtual memory requests were honoured by allocating new virtual memory addresses. When the programs approached them, OS/2 mapped them to physical memory. A pool of free physical memory was always kept available by periodically storing the Least Recently Used (LRU) segments (16 bits OS/2) or 4 KiB Page Frames (32 bits OS/2) to a reserved place on disk (swapping out). They were temporarily archived in swapper.dat in such a way that they could be retrieved later somewhere in real memory, but always mapped to the same virtual address where the program expected them (swapping in).
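The swapping out and swapping in described above can be mimicked with a toy pager. This is a minimal sketch and not the OS/2 algorithm: the class and its fields are made up, and it evicts the least recently used page only at the moment a frame is needed, whereas OS/2 kept a free pool by swapping out periodically.

```python
from collections import OrderedDict

class LruPager:
    """Toy demand pager: a fixed number of frames, LRU eviction, a set as 'swap file'."""

    def __init__(self, frames: int):
        self.frames = frames
        self.resident = OrderedDict()  # page -> None, least recently used first
        self.swapped = set()           # pages currently parked in the swap file
        self.faults = 0
        self.swap_ins = 0

    def touch(self, page: int) -> None:
        if page in self.resident:
            self.resident.move_to_end(page)  # mark as most recently used
            return
        self.faults += 1                     # page fault: page is not in memory
        if len(self.resident) >= self.frames:
            victim, _ = self.resident.popitem(last=False)
            self.swapped.add(victim)         # swapping out
        if page in self.swapped:
            self.swapped.discard(page)       # swapping in
            self.swap_ins += 1
        self.resident[page] = None

pager = LruPager(frames=3)
for page in [1, 2, 3, 1, 4, 2]:
    pager.touch(page)
print(pager.faults, pager.swap_ins, list(pager.resident), pager.swapped)
```

Note that the page keeps its number (its virtual address) when it returns; only the frame it lands in may differ.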

The Protected Mode of the Intel Processor

In protected mode the operating system presented the programmer a range of usable virtual memory addresses, that could be either segmented (consisting of multiple address sections) or flat (one contiguous space).

16 bit OS/2 1.0 used the segmented 16:16 (selector:offset) scheme of the Intel 80286 processor to address up to 16 MiB of physical memory. Memory was swapped between RAM and disk in segments of up to 64 KiB.

32 bit OS/2 2.0 used the flat (linear) 0:32 protected mode addressing scheme of the Intel 80386 processor to address 4 GiB per segment. It also used the more advanced paging mechanism of the Intel 386 processor that divided the 4 GiB into 4 KiB page frames instead of 64 KiB segments.
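How a flat 32 bit address falls apart into a page number and an offset within a 4 KiB page can be shown in a few lines. The helper name is hypothetical; only the 4 KiB page size and the 4 GiB range come from the text.

```python
PAGE_SIZE = 4096          # 4 KiB page frames
ADDRESS_SPACE = 2 ** 32   # 4 GiB flat address space

def split_linear(address: int) -> tuple[int, int]:
    """Return (page number, offset within the page) for a 32 bit linear address."""
    if not 0 <= address < ADDRESS_SPACE:
        raise ValueError("not a 32 bit address")
    return address >> 12, address & (PAGE_SIZE - 1)

print(ADDRESS_SPACE // PAGE_SIZE)                   # number of pages in 4 GiB
print([hex(part) for part in split_linear(0x00012345)])
```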

In fact OS/2 2.0 used three segments to internally address the Intel 80386 processor. But only the kernel, running in privileged mode, used segments 1 and 2. So protected mode programmers only needed to refer to the offset address of the first segment (0). This seemingly flat memory model was easier to use.

But to comply with the needs of 16 bit OS/2 applications and libraries, only the first 512 MiB of the 4 GiB of virtual memory space of the first segment (#0) were given to 32 bit user programs. This virtual address limit of 512 MiB had to do with limitations of the Local Descriptor Table (LDT), on which 16 bit programs using 64 KiB segments depended.
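The 512 MiB figure follows from the size of the LDT: 8192 descriptors of 64 KiB each. The sketch below assumes the "tiled" layout as I understand it, in which LDT entry n describes the n-th 64 KiB block of the flat address space, so that 16:16 and 0:32 addresses can be converted into each other by shifting bits. The function names are mine.

```python
LDT_ENTRIES = 8192        # a selector has a 13 bit index
SEGMENT_SIZE = 64 * 1024  # largest 16 bit segment

def flat_to_selector_offset(flat: int) -> tuple[int, int]:
    """0:32 -> 16:16, assuming tiled LDT selectors (table bit and ring 3 bits set)."""
    if not 0 <= flat < LDT_ENTRIES * SEGMENT_SIZE:
        raise ValueError("address lies above the 512 MiB limit")
    return ((flat >> 16) << 3) | 0b111, flat & 0xFFFF

def selector_offset_to_flat(selector: int, offset: int) -> int:
    """16:16 -> 0:32 under the same assumption."""
    return ((selector >> 3) << 16) | offset

print(LDT_ENTRIES * SEGMENT_SIZE // 2**20, "MiB reachable through the LDT")
sel, off = flat_to_selector_offset(0x00030010)
print(hex(sel), hex(off), hex(selector_offset_to_flat(sel, off)))
```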

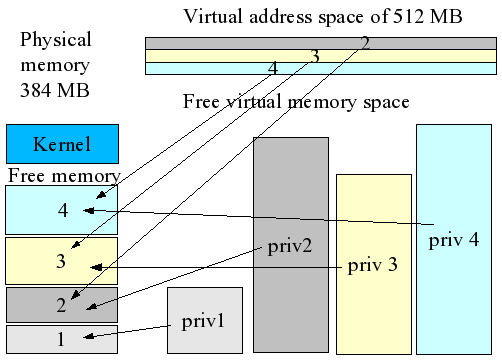

In the example of Figure 1, four protected mode programs use virtual memory addresses up to their virtual address limit of 512 MiB. The lower blocks of virtual memory are for private use and the upper ones are shared. But less than a third of their virtual memory could be mapped in RAM (left). So two thirds had to be in the page file as soon as it was committed (mapped).

Figure 1. Protected mode usage

Notice that programs 2 and 4 could not fit in RAM when they had to share it with the kernel. But as long as their active part (working set) is in memory, they run. So, from the user's perspective, virtual memory is a way to save money on physical memory. From the programmer's perspective, overcommitting memory allowed programs and data to grow.

Using virtual memory addresses had another advantage. Real mode programs could write into any physical memory location. A program could ask for some extra memory. The memory manager could allocate some physical memory addresses from its free pool, but nothing prevented the program from writing accidentally or even purposefully (virus) to another location, even the kernel. And without safeguards, TRAP BOOM, because the memory manager / operating system was not involved. A program sends its instructions to the processor, and not to an interpreter or debugger in the operating system. So harm was easily done.

To resolve this problem in hardware, the Intel 286 processor got a protected mode to support multiprogramming using virtual memory. Programs running in protected mode did not write directly to physical memory addresses, but only indirectly by using virtual memory addresses. And as the virtual addresses needed to be translated to physical addresses, the operating system had an opportunity to check that the requested addresses were correct. The valid addresses were laid down by the operating system in the Local Descriptor Table of each process and in the Global Descriptor Table for the whole system.
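The check that the descriptor tables make possible can be pictured with a toy model. This is only a sketch of the idea: the class, the exception name and the dictionary that stands in for an LDT are all made up, and the real check is done by the processor hardware, not by code like this.

```python
class GeneralProtectionFault(Exception):
    """Raised by the toy model when an access falls outside the process' segments."""

class ToyProcess:
    def __init__(self, name: str, ldt: dict[int, int]):
        self.name = name
        self.ldt = dict(ldt)  # selector -> segment length in bytes

    def translate(self, selector: int, offset: int) -> tuple[int, int]:
        """Accept the access only if the selector is known and the offset is in range."""
        limit = self.ldt.get(selector)
        if limit is None or not 0 <= offset < limit:
            raise GeneralProtectionFault(f"{self.name}: {selector:#x}:{offset:#x}")
        return selector, offset

process = ToyProcess("process 1", {0x1F: 0x1000})
print(process.translate(0x1F, 0x0010))   # inside its own segment
try:
    process.translate(0x27, 0x0000)      # selector that is not in its LDT
except GeneralProtectionFault as fault:
    print("fault:", fault)
```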

In the example above 4 processes and the kernel use 384 MiB of RAM. But all running OS/2 programs could have up to 512 MiB of virtual memory, the kernel even much more. Priv 1-4 are the private virtual memory addresses that programs 1-4 were using. They seem to overlap and indeed they do, but in a time-sharing environment only one program (and its LDT) can at any moment have the attention of the processor, just as only the active program window listens to the keyboard or mouse in the PM. So when process 1 is active (has its time-slices) the private memory of processes 2-4 is virtually non-existent. Only their shared memory addresses (S2-4) are visible to process 1 in the protected mode.

When a protected mode program tried to access memory locations that did not belong to the process' working set, it was immediately interrupted by the processor. The operating system got a hardware exception (a page fault or a General Protection Fault) and could decide what to do: shut down the offending program, commit not yet allocated virtual memory as real memory, or shut down the system as a whole.

When a protected mode program threatened to overwrite another program's resident memory, OS/2 decided to close the program immediately (SYS3175). When a less supervised driver overwrote protected memory, a trap error halting OS/2 would occur. This was in contrast to the less stringent 16 bit, and even 32 bit, Windows versions that permitted crippled systems to keep on running. The interrupted Windows user only needed to press a key to get the famous Blue Screen Of Death (of course it should have been red).

Virtual Memory and Money Compared

The concept of virtual memory is not easy to understand. But if you compare memory with money, and you can imagine that money can exist virtually in the form of “promises” (the credit in your bank account) and as “real” money (gold coins in your hand), you could compare the Memory Manager with your bank. Just like a bank, the OS/2 Memory Manager must give programs (its customers) the impression that they can cash all their credit money at any time, while in fact the bank has only a small amount of money actually available (liquid).

When all bank customers ask for their promised money at once, you can expect queues and hyperinflation. If only a few OS/2 programs asked for 100 MiB of their promised virtual memory, most systems would slow down terribly and crash. Indeed, the dynamics of disk thrashing look like a financial crisis. Virtual memory would be worth little as a medium of exchange with the processor, just like your credit account would not impress your business partners. So how does OS/2 handle the hundreds of megabytes of promised virtual memory on systems with only 4-16 MiB of physical memory?

The Lazy Memory Manager of OS/2

The Memory Manager of 32 bit OS/2 2.0 promised all protected mode programs up to 480 MiB of virtual memory in 4,096-byte chunks (pages). 32 MiB were given to shared protected system DLLs with MEMMAN=SWAP,PROTECT. But when a program that only used 8 KiB at startup asked for the first 6 MiB of its promised memory, Memory Manager (MemMan) would notice the request, just say “okay”, but do nothing until the program really touched it. Just like a real manager, MemMan realized the danger of acting too early because MemMan had to deal with the whole system.

On systems with 8 MiB physical RAM the physical memory would mostly be occupied by the system (including the filesystem caches), PM and the WPS, so the promised 6 MiB was only theoretically (virtually) available. It could be made available only partly, and at the cost of a major memory crisis resulting in a slow and possibly unresponsive WPS. But if MemMan waited, time was on its side because the demanding program would soon be pre-emptively interrupted by the OS/2 scheduler. Those things happen in a multiprogramming environment. Time and memory were also needed by other programs. So MemMan's wait and see policy was not a bad idea.

MemMan also did not reserve the promised 6 MiB in the OS/2 page file. Committing virtual memory is not free: it costs some real memory, as bigger page tables must be held in RAM. And writing 6 MiB to the swapper.dat would cause a major system delay because of the relative slowness of hard disks. It would result in an “out of memory” error if swapper.dat filled up a small hard disk, or if a limit was set on its use with SWAPPATH=D:\ 32767 2048. In this case a 2048 KiB swapper.dat in D:\ could grow until the D drive had less than 32767 KiB free space.
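The three fields of the SWAPPATH statement can be pulled apart as follows. This is a hedged sketch: the function names and the dictionary keys are mine, and the growth rule is only my reading of the sentence above (growth is refused when it would leave less than the minimum free space), not a description of the kernel's exact test.

```python
def parse_swappath(line: str) -> dict:
    """Split 'SWAPPATH=<path> <min free KiB> <initial size KiB>' into its fields."""
    key, _, value = line.partition("=")
    if key.strip().upper() != "SWAPPATH":
        raise ValueError("not a SWAPPATH statement")
    path, min_free, initial = value.split()
    return {"path": path, "min_free_kib": int(min_free), "initial_kib": int(initial)}

def may_grow(free_kib: int, growth_kib: int, min_free_kib: int) -> bool:
    """Assumed rule: allow growth only if the minimum free space stays untouched."""
    return free_kib - growth_kib >= min_free_kib

config = parse_swappath(r"SWAPPATH=D:\ 32767 2048")
print(config)
print(may_grow(free_kib=40000, growth_kib=1024, min_free_kib=config["min_free_kib"]))
print(may_grow(free_kib=33000, growth_kib=1024, min_free_kib=config["min_free_kib"]))
```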

MemMan's default was MEMMAN=SWAP,PROTECT and its swapper policy was lazy commit: Do not touch the swapper.dat (the hard disk reserves of the virtual memory bank) unless it's really, really needed.
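Lazy commit can be imitated with a small model in which an allocation only records a promise, and a page is backed the first time it is touched. The class below is a made-up illustration of that policy, not OS/2 code; the 6 MiB request and the 4 KiB page size are taken from the text.

```python
PAGE_SIZE = 4096

class LazyAllocation:
    """A promise of memory: pages are only backed when they are first touched."""

    def __init__(self, size_bytes: int):
        self.pages = -(-size_bytes // PAGE_SIZE)  # round up to whole pages
        self.committed = set()                    # page numbers that got a frame

    def touch(self, offset: int) -> bool:
        """Access one byte; return True if this caused a (first touch) page fault."""
        if not 0 <= offset < self.pages * PAGE_SIZE:
            raise IndexError("outside the allocation")
        page = offset // PAGE_SIZE
        fault = page not in self.committed
        self.committed.add(page)
        return fault

    def committed_bytes(self) -> int:
        return len(self.committed) * PAGE_SIZE

request = LazyAllocation(6 * 2**20)      # the program asks for 6 MiB
print(request.pages, request.committed_bytes())
for offset in (0, 100, 5000):            # it touches bytes in two different pages
    request.touch(offset)
print(request.committed_bytes())
```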

Advantages of Lazy Commit

Thanks to OS/2's magic of smartly reusing shared libraries and data and only lazily (over)committing virtual memory, an OS/2 Warp 4 system with 64 MiB of RAM and a Virtual Machine Size of 213 MiB could still be responsive with a relatively small swapper.dat of 48 MiB:

| Category | Size | Meaning |
|---|---|---|
| Virtual Machine Size | 212.9 MiB | Virtual memory used |
| Free | 0.1 MiB | Pool of free physical memory |
| Amount Swapped | 9.4 MiB | Used swap |
| Applications | 48.5 MiB | Virtual memory of applications |
| Shared Storage | 74.9 MiB | Virtual memory shared by applications |
| Vdisk(s) | 0.0 MiB | Ram disk |
| Disk Cache | 6.0 MiB | Disk caches (HPFS, FAT, CD) |
| Operating System | 14.9 MiB | System memory |

This information was gathered with the memory analysis tool OS2MEMU (20memu.zip) from years ago. Today I use Theseus. Rather big programs like StarOffice, Netscape, Lotus Wordpro and Organizer were loaded as well as a Win-OS/2 session (VDM) with 64 MiB of physical memory under OS/2 Warp 4. Windows 95 and Linux could not replicate the trick.

| Process | In virtual memory (KiB) | In physical memory (KiB) | PID | Process | In virtual memory (KiB) | In physical memory (KiB) | PID |
|---|---|---|---|---|---|---|---|
| PMSHELL | 3524 | 132 | 8 | MIDIDMON | 148 | 0 | 2 |
| CNTRL | 92 | 0 | 4 | DOSCTL | 212 | 0 | 5 |
| WATCHCAT | 1072 | 0 | 7 | SDCTLAD | 124 | 0 | 6 |
| HARDERR | 108 | 0 | 9 | PMSPOOL | 1256 | 0 | 11 |
| PMSHELL | 30912 | 2320 | 13 | PMIOPL | 1464 | 68 | 20 |
| XFILE | 1252 | 20 | 22 | VDM | 9920 | 5040 | 44 |
| SOMDD | 2248 | 56 | 70 | ORG32 | 6676 | 4048 | 83 |
| NETSCAPE | 9388 | 784 | 79 | SOFFICE | 8036 | 5892 | 75 |
| WORDPRO | 13696 | 2536 | 69 | CMD | 384 | 136 | 81 |
| OS20MEMU | 6336 | 6008 | 82 | SUM | 93288 | 26908 | 89 |

Notice that PM Shell runs twice: first as Presentation Manager (Process ID 8) and later as the Workplace Shell (PID 13). The WPS used the most virtual memory (30912 KiB), but only 2320 KiB was in physical memory. This was the most needed WPS code (the so-called Working Set). Memory that was not recently used was swapped out or not yet committed, which freed memory for other applications.
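To see how small the resident part of a process can be, the share of its virtual memory that sits in physical memory can be computed from the table. The snippet copies three rows from it; the helper name is mine.

```python
# (virtual KiB, physical KiB) copied from the process table
processes = {
    "PMSHELL (WPS, PID 13)": (30912, 2320),
    "WORDPRO": (13696, 2536),
    "OS20MEMU": (6336, 6008),
}

def resident_percent(virtual_kib: int, physical_kib: int) -> float:
    """Percentage of a process' virtual memory that is in physical memory."""
    return round(100 * physical_kib / virtual_kib, 1)

for name, (virtual_kib, physical_kib) in processes.items():
    print(f"{name}: {resident_percent(virtual_kib, physical_kib)} % resident")
```

The WPS gets by with well under a tenth of its virtual memory in RAM, while the measuring tool itself, which was active at that moment, is almost completely resident.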

The whole StarOffice 5.1 Suite uses less virtual memory than Wordpro alone. Wordpro needed the Open32 libraries and thus had the burden of a WIN32 API library (“glue”). StarOffice on the other hand was a PM application since 1996 (v 3.1) using its Starview library to enable porting. But this cannot be said of Innotek's port of OpenOffice 1.1.5 for Windows. The OpenOffice.org “module” Writer already uses 66 MiB of physical memory just to start up. Here the native OS/2 port of OpenOffice.org 2.0 did better.

RAM Usage by Process:

--------- private -------- ------ owned shared ------

bytes KBytes MBytes bytes KBytes MBytes who

000EB000 940 0.918 0233B000 36076 35.230 SOFFICE (2.0 beta)

00BF8000 12256 11.969 0370E000 56376 55.055 SOFFICE (1.1.4)
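The three columns in this listing are the same number in different units: hexadecimal bytes, KBytes (bytes divided by 1024) and MBytes. A short check, using a hypothetical helper of my own, confirms the conversion:

```python
def describe(hex_bytes: str) -> tuple[int, int, float]:
    """Convert a hexadecimal byte count into (bytes, KBytes, MBytes)."""
    size = int(hex_bytes, 16)
    return size, size // 1024, round(size / 2**20, 3)

for column in ("000EB000", "0233B000", "00BF8000", "0370E000"):
    print(column, describe(column))
```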

Innotek's ODIN based middleware glued 32 bit Windows programs (Java RT, OpenOffice.org) and OS/2 together. But this glue takes its toll in terms of virtual and real memory use. And this can be a problem with the relative lack of virtual memory address space for user processes under OS/2.

The Working Set Concept

At a certain moment the 8 KiB program that was allocated 6 MiB of virtual memory, would get a time slice from the OS/2 scheduler in which it could start writing in its virtual memory. But because MemMan did nothing to make the promised memory resources available, writing the first bit would cause a page fault in the MMU. So the system was interrupted and MemMan took action to create a 4 KiB Page Frame in memory that would be added to the working set of the program.

Virtual memory again can be compared with credit money. The memory bank promises you a loan to build your house, but if you demand it all, the bank employee is in trouble. She or he can only give you some money to start with and promises you to make the rest available later.

But a protected mode program has no idea about the real memory situation. It only sees its sea of virtual memory. What has been manipulated in its virtual world between its time slices is unknown to it. When it is asking for 6 MiB of memory and it is getting only a 4 KiB chunk to start with, the program supposes that the rest of the allocated virtual memory is available too. And indeed it becomes available but only in bits and pieces as physical memory is shared with other applications.

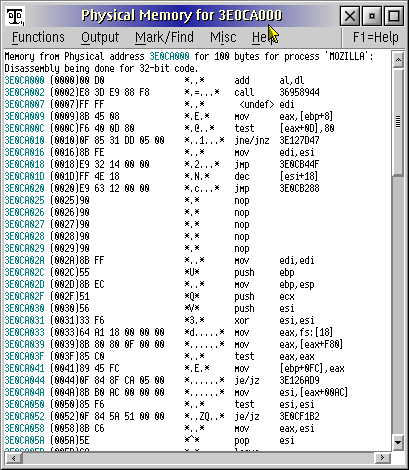

Figure 2. Disassembled code in physical memory

But even with little physical memory from Memory Manager, the program can use its time-slices and modest working set of physical memory to instruct the small registers of the processor, just as you can start building your house with only a small starter budget. You do not need the material to build the roof right away.

Even in a single-tasking environment with the fastest dual core processors you cannot run a program from beginning to end at once. Especially not when relatively slow I/O operations are involved. Programs are run by the processor step by step. The program starts somewhere instructing the processor and ends somewhere with a quit.

This is the reason why the Memory Manager does not need to do anything when a program demands 6 MiB of virtual memory for its plans. It just waits and sees. It knows that virtual memory is given in pieces of 4 KiB. And it knows that a few page frames (cash money) obtained from its free pool of memory can be enough to feed the processor with the next instructions during the time slices of the process.
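One common textbook way to make the working set concrete is to take the set of pages a program touched during its most recent references. The sketch below uses that definition as an assumption; the text does not say that OS/2 computes its working sets this way, and the reference string is invented.

```python
def working_set(references: list[int], window: int) -> set[int]:
    """Pages touched during the last `window` memory references."""
    return set(references[-window:]) if window > 0 else set()

# Invented reference string: the program starts up in pages 1-3, then loops in page 7.
references = [1, 2, 3, 1, 1, 2, 7, 7, 7, 7]

print(working_set(references, 4))
print(working_set(references, 6))
print(len(set(references)), "pages touched in total")
```

However much was allocated, only the few pages in the recent window need to be in physical memory for the program to keep running.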

MEMMAN=SWAP,PROTECT,COMMIT

When the parameter COMMIT was added (MEMMAN=SWAP,PROTECT,COMMIT), the swapfile would grow more like that of a Unix system because the memory banker MemMan reserves memory or disk space for any virtual memory request. If it could not be made available, a memory error would occur. COMMIT was certainly a more solid way of banking, but it was not fast enough for the hard disks of early personal computers.

Today we consider a 33 MHz PCI bus slow compared to a 2000 MHz CPU's clock speed, but to let a 16 MHz 32 bit Intel 386 processor deal with a slow hard disk on an 8.33 MHz 16 bit ISA bus was really a waste of time. Bus mastering (MCA) was rare. The system would slow down terribly and the WPS and other user processes could become unstable. So OS/2 preferably only committed virtual memory when needed (lazy commit).
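The difference between the two ways of banking can be put into a toy model. The class and its names are invented for illustration: with COMMIT every request must be covered by backing store (RAM plus swap space) at once, while the lazy banker simply says "okay".

```python
class MemoryBank:
    """Toy banker: tracks promised memory against the available backing store."""

    def __init__(self, backing_kib: int, commit: bool):
        self.backing_kib = backing_kib  # RAM plus swap space that could back pages
        self.commit = commit            # True models MEMMAN=SWAP,PROTECT,COMMIT
        self.promised_kib = 0

    def allocate(self, kib: int) -> bool:
        """Return True if the request is granted."""
        if self.commit and self.promised_kib + kib > self.backing_kib:
            return False                # strict banker: memory error right away
        self.promised_kib += kib        # lazy banker: promise now, pay on first touch
        return True

lazy = MemoryBank(backing_kib=8192, commit=False)
strict = MemoryBank(backing_kib=8192, commit=True)
for bank in (lazy, strict):
    print([bank.allocate(6144), bank.allocate(6144)], bank.promised_kib)
```

The lazy bank ends up overcommitted (12288 KiB promised against 8192 KiB of backing), which is harmless as long as the programs never touch all of it.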

Nevertheless, the setting MEMMAN=SWAP,PROTECT,COMMIT could add to OS/2's stability today. With the swapper.dat on a fast hard disk with bus mastering and with 128 MiB or more of JFS very lazy write cache, the Unix-like COMMIT approach doesn't noticeably slow down your system. You might see a sandbox every 16 seconds when you have IFS=C:\OS2\JFS.IFS /CACHE:128000 /LW:16,256,8 /AUTOCHECK:* in your CONFIG.SYS. But that sync every 16 seconds can be changed to higher values so that the cache algorithms can do their work in idle time.

By the way the maximum size of the swapper.dat is the same as the maximal file size of HPFS: 2 GiB. But it's unlikely that you will ever need it even with COMMIT.

MEMMAN=NOSWAP,PROTECT

If you have some gigabytes of memory, the setting meant for diskless devices, MEMMAN=NOSWAP,PROTECT, is an option.

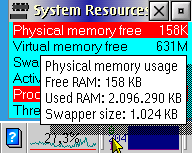

Figure 3. System Resources and the Sentinel widget providing information about virtual memory resources

Protected mode programs still use virtual memory, but the system cannot swap pages to disk. But OS/2 can keep unused pages in compressed form in memory. In fact this “in memory” swap file or buffer is also used before any swapping to disk occurs.

If you have less memory, NOSWAP is a way to study the symptoms of low virtual memory situations. Without swapper.dat OS/2 cannot do lazy commits, so “lack of memory” errors soon show up on desktop systems. Especially if you use a lot of threads. But before you do so, make sure that you can easily restore the CONFIG.SYS to its former state.

Rick Papo's System Resources provided a better indication of the free virtual memory resources than the Sentinel memory watcher of XWorkplace.

Virtual Address Space under OS/2

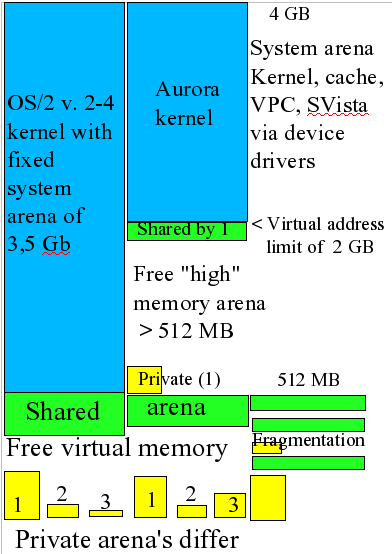

Figure 4. Virtual address space and the limits

When running in protected mode, the Intel 386(DX) processor could address 4 GiB of virtual memory per process.

32 bits OS/2 2.0 gave the first 512 MiB of this linear address space to programs. The user space was divided in a shared arena, a free pool of memory, and a private arena. The rest of the addresses went to the kernel (system arena).

The border between them became known as the virtual address limit (VAL) of protected mode user programs.

The System Arena

Though the kernel can theoretically access terabytes of virtual memory (using segments), it also needs a projection in the 4 GiB virtual address space of protected mode programs. This system arena contains kernel routines, file system caches, frame buffers and memory allocated by device drivers.

PCI bus and APIC mappings in the highest region are the reason that most motherboards support only 3.5 GiB of RAM. For this reason you could compare the system arena somehow with the 640/1024 KiB BIOS memory of DOS, with the important difference that protected mode OS/2 programs can only indirectly access system resources by using protected system DLLs.

The Shared Arena

The upper part of the memory for protected mode programs used virtual address space meant for shared code and data (shared arena). Sharing of memory is essential for interprocess communication, sharing data (clipboard) and sharing a common look and feel (PM windows, WPS widgets, printer objects). Moreover, sharing DLLs and data reduces the system overhead.

The memory objects allocated by DLLs are loaded from the virtual address limit 512 MiB downwards, so that the free pool of memory is reduced. The first DLL is often DOSCALL1.DLL which contains system APIs. Following it are shared libraries like that of Presentation Manager, the WPS and network API's. Integrated program suites like Lotus SmartSuite, StarOffice and Mozilla also share their common code and data here.

In the process of loading and unloading programs and their shared DLLs, the shared arena can become fragmented. Most memory that is deallocated leaves a hole in virtual address space. Only the memory objects that border the free pool of memory do not, since those fragments can be coalesced into larger parts. When the shared arena becomes very fragmented, it may be difficult for the OS/2 system loader to find contiguous address space for big DLLs.
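Why fragmentation hurts can be seen by comparing the total free space of an arena with its largest contiguous hole. The sketch below uses invented numbers and a helper of my own; an arena is just a range of addresses with some allocated blocks in it.

```python
def holes(arena_size: int, allocations: list[tuple[int, int]]) -> tuple[int, int]:
    """Return (largest contiguous hole, total free space) for (start, length) blocks."""
    free, cursor = [], 0
    for start, length in sorted(allocations):
        if start > cursor:
            free.append(start - cursor)      # gap before this block
        cursor = max(cursor, start + length)
    if cursor < arena_size:
        free.append(arena_size - cursor)     # gap after the last block
    return max(free, default=0), sum(free)

# Invented example: a 100 MiB arena with three DLL blocks left after some unloading.
largest, total = holes(100, [(10, 20), (40, 10), (70, 20)])
print(f"{total} MiB free in total, but the largest hole is only {largest} MiB")
```

A DLL that needs 30 MiB of contiguous address space would fail to load here although 50 MiB is free.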

The Private Arena

The lowest part of the user arena of a protected mode process contains its privately used memory addresses (private arena). The contents of the private arena can only be seen (and accessed) by the program that has the current time slice of the processor.

Remember that (on a non-SMP system) at any moment only one process has access to the processor. During its processor time its view of virtual memory is active. So when process 1 is active, yellow 2 and 3 seem to be non-existent. Process 1 can only “see” the shared parts of 2 and 3.

So when user process 1 is active, it can access its own private code and data (yellow 1) and parts of the shared data and code (green). When user process 2 is active, it can access its private code and data (yellow 2) and again parts of the shared arena. The virtual memory space in the first 512 MiB that is neither private nor shared memory (white) can be considered free, but the shared arena might be too fragmented to load large memory objects in it (see the last bar diagram).

The Free Pool of Memory

The free pool of memory between the private and shared arena can easily disappear. Its lower border is determined by the biggest process in the private arena, its upper border by the size of the shared arena. A shared arena may also grow because of fragmentation.

The OS/2 preloaded programs and system DLLs may occupy some 200-300 MiB of the 512 MiB virtual address space for user programs. This means that a new program can allocate at most 200-300 MiB of new memory addresses and the next one probably less.

If you load a program that allocates 30 MiB of virtual memory in the shared arena, the address space of any other program is reduced by 30 MiB. If it is a WPS program, other WPS programs may benefit from it, but OS/2 CLI and PM programs will probably not.

If a program allocates 50 MiB of virtual address space in the private arena only its free address space diminishes by 50 MiB.

But there is one exception: it must not be the biggest process in the private arena (process 1). Its size determines the lower border of the free arena. For this reason big Java programs using private memory can give problems when you cannot load them high (see later): Not by using real memory (MEMMAN can handle that), but by exhausting the virtual address space.
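The rules of this section can be summarized in one formula: the free pool runs from the top of the biggest private arena to the bottom of the shared arena. The function below is my own shorthand for that, with invented process sizes; it ignores fragmentation.

```python
def free_pool_mib(val_mib: int, private_mib: list[int], shared_mib: int) -> int:
    """Free address space between the biggest private arena and the shared arena."""
    lower_border = max(private_mib, default=0)   # set by the biggest process
    upper_border = val_mib - shared_mib          # shared arena grows downwards
    return max(0, upper_border - lower_border)

VAL = 512
print(free_pool_mib(VAL, [50, 120, 30], shared_mib=250))   # starting point
print(free_pool_mib(VAL, [50, 120, 30], shared_mib=280))   # 30 MiB more shared
print(free_pool_mib(VAL, [100, 120, 30], shared_mib=250))  # a smaller process grows
print(free_pool_mib(VAL, [50, 170, 30], shared_mib=250))   # the biggest process grows
```

Only growth of the shared arena or of the biggest private arena shrinks the pool for everybody.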

The High Memory Arenas

The Aurora kernel (OS/2 4.5x) introduced new private and shared high memory arenas (HMA) above the old 512 MiB VirtualAddressLimit (VAL). The Aurora kernel accepted VALs up to 3072 MiB with the CONFIG.SYS setting:

VIRTUALADDRESSLIMIT=3072

and let Java load code high with:

SET JAVA_HIGH_MEMORY=1

But as the system arena (the virtual projections of the kernel) is also used to run and contain the projections of caches, frame buffers and other system memory allocated by device drivers, it is unlikely that you can ever use this setting on a desktop system. At least not when you want to use a 1 GiB JFS cache and a 128 MiB video card. You simply cannot overcommit the system arena. So the default VAL of 1024 is more realistic.
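The trade-off is simple arithmetic: whatever the VAL gives to user programs is taken from the 4 GiB that the system arena shares with them. The sketch below only adds up the two demands named above and assumes, for the sake of the example, that the kernel itself needs nothing, so reality is even tighter. The names are mine.

```python
ADDRESS_SPACE_MIB = 4096

def system_arena_mib(val_mib: int) -> int:
    """Address space left for the system arena above the virtual address limit."""
    return ADDRESS_SPACE_MIB - val_mib

def fits(val_mib: int, demands_mib: list[int]) -> bool:
    return sum(demands_mib) <= system_arena_mib(val_mib)

demands = [1024, 128]  # 1 GiB JFS cache and a 128 MiB video card, as in the text
for val in (512, 1024, 3072):
    print(f"VAL={val}: system arena {system_arena_mib(val)} MiB, fits: {fits(val, demands)}")
```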

Out of Memory Errors on Current OS/2 Systems

OS/2 Warp 4 enabled me to run many user programs with 64 MiB of RAM. Thanks to lazy commit, swapping of unused memory and 74 MiB of shared memory, OS/2 managed to run an overcommitted system with a Virtual Machine Size of 213 MiB in only 64 MiB of RAM plus the swapper.dat.

But under-commitment also exists. It is like the situation of DOS users who got less than 640 KiB of RAM, even if they had 2 MiB of physical memory available, because DOS could only address 1024 KiB of RAM directly. It gave 384 KiB to the PC BIOS and at least 50 KiB to the kernel and drivers.

Under-commitment of OS/2 happens when you have 2 GiB of physical memory and OS/2 applications complain about “out of memory” errors when you still have 1 GiB of memory free. Most of the time the reason is a lack of usable address space in the shared arena. And this happens earlier when the high memory arenas of modern kernels are not optimally used.

You can read more about this in Using Theseus to study memory usage under OS/2. I also wrote a more practical follow-up article that will be published later: Virtual Memory Problems under OS/2.

[1] Fotheringham, J. 1961. “Dynamic storage allocation in the Atlas computer, including an automatic use of a backing store.” Communications of the ACM 4, 10 (October), 435-436.

[2] Kilburn, T., D. B. G. Edwards, M. J. Lanigan, and F. H. Sumner. 1962. “One-level storage system.” IRE Transactions EC-11, 2 (April), 223-235.
